In which scenarios is a Confusion Matrix most useful for evaluating machine learning models, and why?

Answer

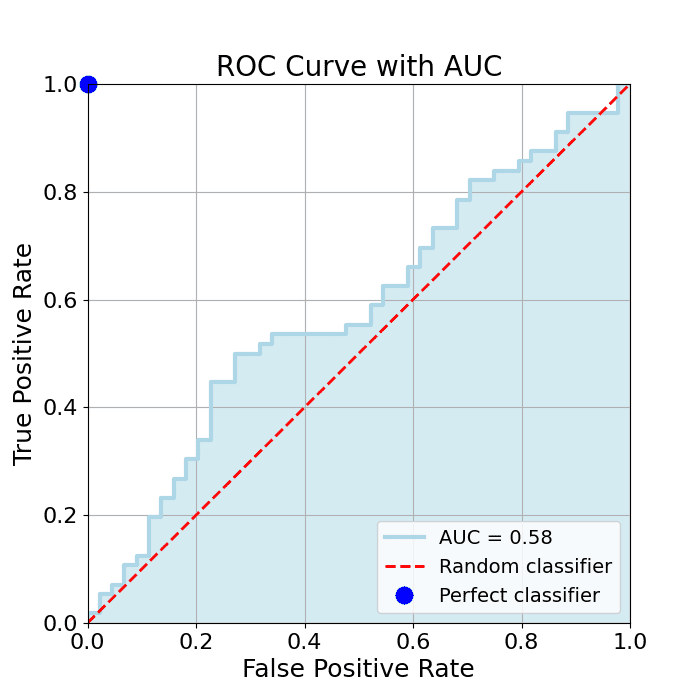

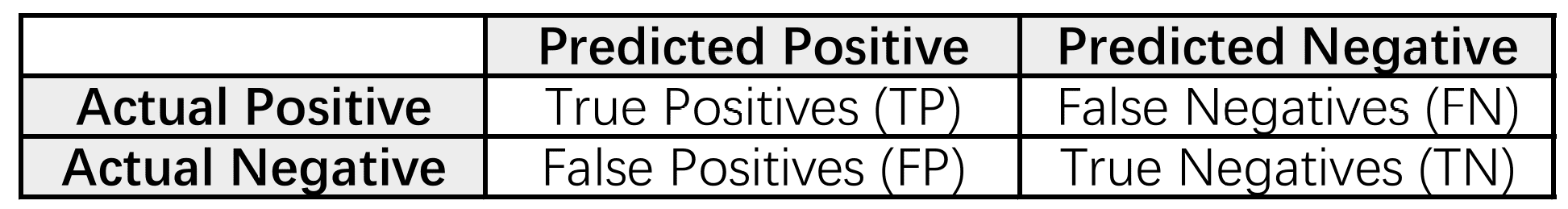

A Confusion Matrix is a table that summarizes the performance of a classification model by comparing predicted class labels against actual class labels. For a binary problem it displays the counts of True Positives (positives correctly predicted as positive), True Negatives (negatives correctly predicted as negative), False Positives (negatives incorrectly predicted as positive), and False Negatives (positives incorrectly predicted as negative). While its form is simple, it becomes indispensable whenever you need more insight than overall accuracy provides. Below are the key scenarios where a confusion matrix shines.

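(1) Imbalanced Datasets: Reveals whether the minority class is being predicted well. Overall accuracy can hide this, because a model that always predicts the majority class still scores high accuracy while missing every minority-class example.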

(2) Understanding Error Types: Shows True Positives, True Negatives, False Positives, and False Negatives, which is crucial when different errors have different costs (e.g., medical tests, fraud detection).

(3) Multi-Class Classification: Identifies which specific classes are being confused.

(4) Comparing Models: Enables a detailed comparison of model strengths and weaknesses beyond overall accuracy, since two models with the same accuracy can make very different kinds of errors.
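To make scenarios (1) and (3) concrete, here is a minimal sketch in plain Python. The helper names `confusion_counts` and `most_confused_pairs`, as well as the label data, are hypothetical and chosen only for illustration; in practice a library routine such as scikit-learn's `confusion_matrix` would typically be used instead.

```python
from collections import Counter


def confusion_counts(y_true, y_pred, positive=1):
    """Count TP, TN, FP, FN for a binary problem (hypothetical helper)."""
    counts = Counter()
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            counts["TP" if actual == positive else "FP"] += 1
        else:
            counts["FN" if actual == positive else "TN"] += 1
    return counts


def most_confused_pairs(y_true, y_pred):
    """Return (actual, predicted) error pairs, most frequent first."""
    errors = Counter((a, p) for a, p in zip(y_true, y_pred) if a != p)
    return errors.most_common()


# Scenario (1): made-up imbalanced data with 95 negatives and 5 positives,
# scored against a model that always predicts the majority class.
y_true = [0] * 95 + [1] * 5
y_pred = [0] * 100

c = confusion_counts(y_true, y_pred)
accuracy = (c["TP"] + c["TN"]) / len(y_true)
recall = c["TP"] / (c["TP"] + c["FN"])
print(dict(c), accuracy, recall)

# Scenario (3): made-up multi-class labels; list which classes get confused.
mc_true = ["cat", "cat", "cat", "dog", "dog", "bird"]
mc_pred = ["dog", "dog", "cat", "dog", "cat", "bird"]
pairs = most_confused_pairs(mc_true, mc_pred)
print(pairs)
```

In this toy data the always-negative model reaches 95% accuracy while its recall on the positive class is zero, which is exactly the failure that the False Negative cell exposes. The multi-class part lists the off-diagonal cells of the matrix, so the most frequent confusion (here, "cat" predicted as "dog") appears first.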

Here is an example of a binary-class Confusion Matrix.