What is Gradient Descent in machine learning?

Answer

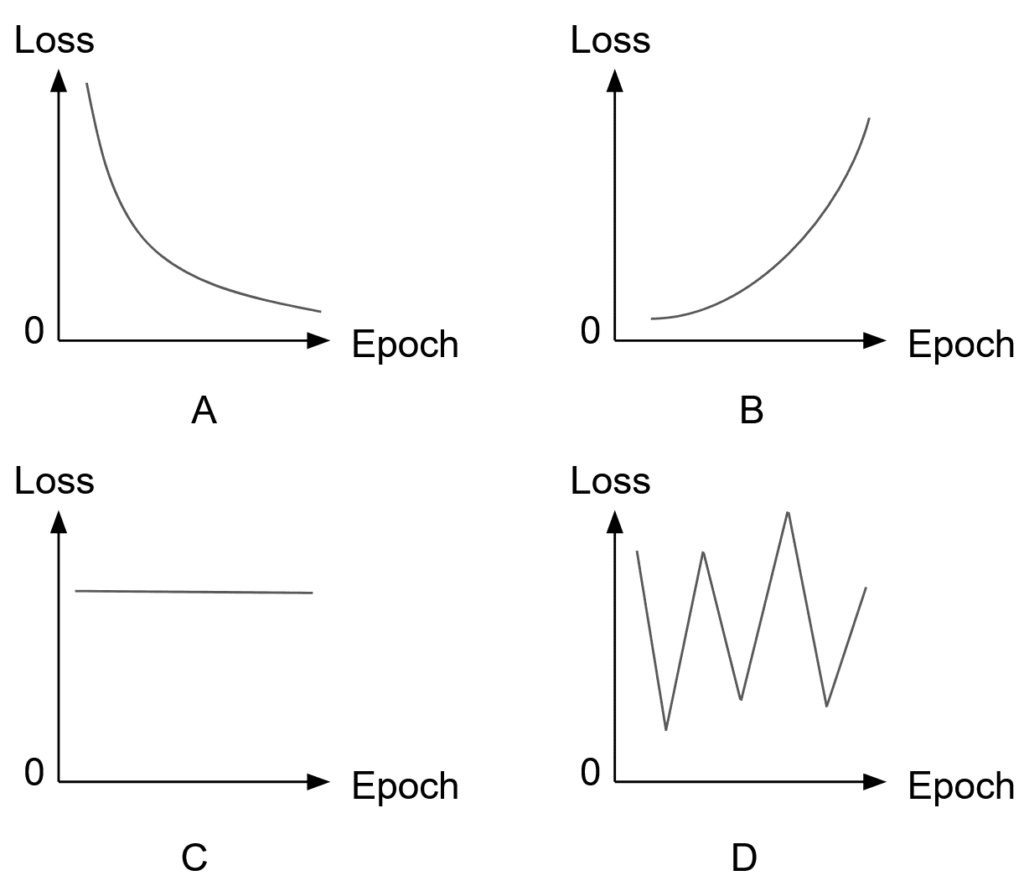

Gradient descent is an iterative optimization algorithm used to minimize a function, most commonly a cost or loss function in machine learning, by repeatedly stepping in the direction of steepest descent (i.e., opposite to the gradient).

In each iteration, the algorithm computes the gradient of the function with respect to its parameters, then updates the parameters by subtracting the gradient multiplied by a small positive scalar called the learning rate.
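A minimal sketch of this iteration, assuming a one-parameter example cost J(θ) = (θ − 3)² chosen purely for illustration (its gradient is 2(θ − 3) and its minimum is at θ = 3). The function names `grad_J` and `gradient_descent_step`, the starting point, and the learning rate of 0.1 are hypothetical choices, not part of any library.

```python
def grad_J(theta: float) -> float:
    """Gradient of the illustrative cost J(theta) = (theta - 3)**2."""
    return 2.0 * (theta - 3.0)


def gradient_descent_step(theta: float, learning_rate: float) -> float:
    """One update: subtract the gradient scaled by the learning rate."""
    return theta - learning_rate * grad_J(theta)


theta = 0.0  # arbitrary starting point
for _ in range(50):
    theta = gradient_descent_step(theta, learning_rate=0.1)

print(theta)  # close to the minimizer theta = 3
```

Each step here shrinks the distance to the minimum by a constant factor (1 − 2 · 0.1 = 0.8), which is why a fixed number of iterations is enough for this toy cost.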

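This process is repeated until the parameters converge to a minimum (given a suitably small learning rate) or until the updates become negligibly small. For convex functions, any minimum reached this way is the global minimum; for non-convex functions, gradient descent may instead settle in a local minimum.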
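The stopping rule and the convex/non-convex distinction can be sketched as below. This assumes a "negligibly small update" means the absolute step size drops below a tolerance `tol`, with `max_iters` as a safety cap; both the names and the default values are illustrative assumptions. The non-convex cost J(θ) = θ⁴ − 3θ² + θ is a hypothetical example with two local minima, used to show that the starting point decides which one is found.

```python
from typing import Callable, Tuple


def gradient_descent(
    grad: Callable[[float], float],
    theta0: float,
    learning_rate: float = 0.01,
    tol: float = 1e-8,
    max_iters: int = 100_000,
) -> Tuple[float, int]:
    """Run gradient descent from theta0 until the update is smaller than tol.

    Returns the final parameter value and the number of iterations used.
    Stops after max_iters iterations if the tolerance is never reached.
    """
    theta = theta0
    for i in range(1, max_iters + 1):
        step = learning_rate * grad(theta)
        theta -= step
        if abs(step) < tol:  # update is negligibly small: treat as converged
            return theta, i
    return theta, max_iters


# Convex example: J(theta) = (theta - 3)**2 has one minimum, which is global.
theta_min, n_iters = gradient_descent(lambda t: 2.0 * (t - 3.0), theta0=0.0, learning_rate=0.1)
print(f"convex: theta = {theta_min:.6f} after {n_iters} iterations")


# Non-convex example: J(theta) = theta**4 - 3*theta**2 + theta has two local minima.
def J(theta: float) -> float:
    return theta**4 - 3 * theta**2 + theta


def grad_J(theta: float) -> float:
    return 4 * theta**3 - 6 * theta + 1


left, _ = gradient_descent(grad_J, theta0=-2.0)
right, _ = gradient_descent(grad_J, theta0=2.0)
print(f"start -2.0 -> theta = {left:.4f}, J = {J(left):.4f}")
print(f"start  2.0 -> theta = {right:.4f}, J = {J(right):.4f}")
```

Starting from −2.0 reaches the global minimum near θ ≈ −1.30, while starting from 2.0 stops at the shallower local minimum near θ ≈ 1.13, even though both runs satisfy the same stopping rule.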

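The update rule for gradient descent:

$$
\theta \leftarrow \theta - \alpha \, \nabla_\theta J(\theta)
$$

where $\theta$ represents the parameters being optimized (for example, the weights in a model), $\alpha$ represents the learning rate, and $\nabla_\theta J(\theta)$ is the gradient of the cost function $J(\theta)$ with respect to $\theta$.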
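As a sketch of the update rule θ ← θ − α∇θJ(θ) applied to more than one parameter, the example below fits a line y ≈ w·x + b with a mean-squared-error cost J(w, b) = (1/n) Σ (w·xᵢ + b − yᵢ)². The choice of linear regression, the toy data, the names `mse_gradient` and `fit`, and the settings α = 0.05 with 2000 iterations are all illustrative assumptions. It uses plain Python lists so it runs without external dependencies.

```python
from typing import List, Sequence


def mse_gradient(theta: Sequence[float], xs: Sequence[float], ys: Sequence[float]) -> List[float]:
    """Gradient of J(w, b) = (1/n) * sum((w*x + b - y)**2) with respect to theta = [w, b]."""
    w, b = theta
    n = len(xs)
    residuals = [w * x + b - y for x, y in zip(xs, ys)]
    grad_w = (2.0 / n) * sum(r * x for r, x in zip(residuals, xs))
    grad_b = (2.0 / n) * sum(residuals)
    return [grad_w, grad_b]


def fit(xs: Sequence[float], ys: Sequence[float], alpha: float = 0.05, n_iters: int = 2000) -> List[float]:
    """Fit y ~ w*x + b by applying the gradient descent update rule n_iters times."""
    theta = [0.0, 0.0]  # initial parameters [w, b]
    for _ in range(n_iters):
        grad = mse_gradient(theta, xs, ys)
        # theta <- theta - alpha * grad_theta J(theta), applied to each component
        theta = [t - alpha * g for t, g in zip(theta, grad)]
    return theta


xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # toy data generated from y = 2x + 1, no noise
w, b = fit(xs, ys)
print(f"w = {w:.4f}, b = {b:.4f}")
```

With these settings the fitted values should land very close to w = 2 and b = 1, the line the toy data was generated from. All components of θ are updated from the same gradient before any of them changes, which is what the vector form of the update rule expresses; too large an α (here, above roughly 0.15) would make the iterates diverge instead.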