Please compare focal loss and weighted cross-entropy.

Answer

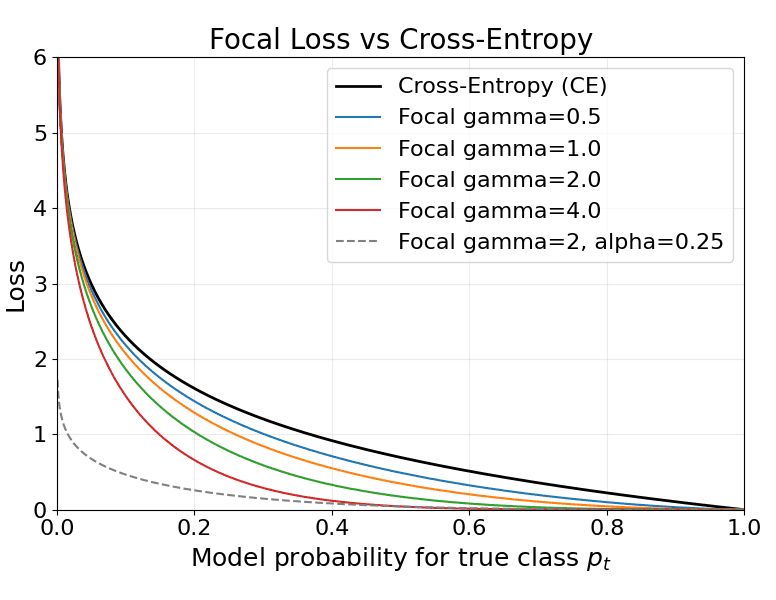

Weighted Cross-Entropy (WCE) rescales the loss per class to correct for class-prior imbalance; it is simple and relatively robust to noisy labels. Focal Loss (FL) multiplies cross-entropy by a difficulty-dependent factor that suppresses the gradients of easy examples and focuses learning on hard ones. This makes FL preferable when many easy negatives overwhelm training, but it requires careful tuning to avoid amplifying label noise.

Weighted cross-entropy:

$$\mathrm{WCE}(p_t) = -\alpha_t \log(p_t)$$

Where: $p_t$ is the model probability for the ground-truth class;

$\alpha_t$ is the per-class weight for class $t$.
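As a minimal sketch of the weighted cross-entropy term for a single example, writing `p_t` for the predicted probability of the ground-truth class and `alpha_t` for that class's weight: the function name, the two-class probabilities, and the weights below are hypothetical values chosen for illustration, not taken from the answer above.

```python
import math

def weighted_cross_entropy(probs, target, class_weights):
    """Per-example weighted cross-entropy: -alpha_t * log(p_t)."""
    p_t = probs[target]              # predicted probability of the true class
    alpha_t = class_weights[target]  # weight assigned to the true class
    return -alpha_t * math.log(p_t)

# Hypothetical two-class setup: class 1 is rare, so it gets the larger weight.
probs = [0.8, 0.2]
class_weights = [0.25, 0.75]

loss_common = weighted_cross_entropy(probs, 0, class_weights)
loss_rare = weighted_cross_entropy(probs, 1, class_weights)
print(loss_common, loss_rare)
```

The weight depends only on the class, not on how confident the model is, so every example of a given class is rescaled by the same constant.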

Focal loss:

$$\mathrm{FL}(p_t) = -\alpha_t (1 - p_t)^{\gamma} \log(p_t)$$

Where: $p_t$ is the model probability for the ground-truth class;

$\alpha_t$ is the optional per-class weight for class $t$;

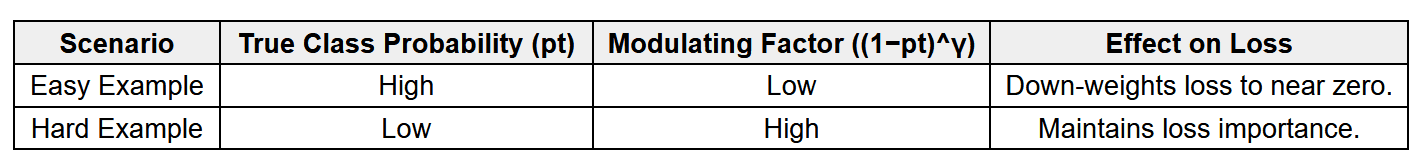

$\gamma \ge 0$ is the focusing parameter that down-weights easy examples; with $\gamma = 0$ the loss reduces to weighted cross-entropy.
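To make the down-weighting concrete, here is a small sketch of the per-example focal loss, assuming the standard form `-alpha_t * (1 - p_t)**gamma * log(p_t)`. The function name, the default `gamma=2.0`, and the example probabilities are illustrative assumptions rather than recommended settings.

```python
import math

def focal_loss(p_t, gamma=2.0, alpha_t=1.0):
    """Per-example focal loss: -alpha_t * (1 - p_t)**gamma * log(p_t)."""
    modulating = (1.0 - p_t) ** gamma  # near 0 for easy examples, near 1 for hard ones
    return -alpha_t * modulating * math.log(p_t)

easy = focal_loss(0.9)               # confidently correct example
hard = focal_loss(0.1)               # badly misclassified example
ce_easy = focal_loss(0.9, gamma=0.0) # gamma = 0 gives plain cross-entropy

print(easy / ce_easy)  # fraction of the cross-entropy loss kept for the easy example
print(hard / easy)     # how much more the hard example contributes
```

With `gamma = 2`, the easy example at `p_t = 0.9` keeps only about 1% of its cross-entropy loss, while the hard example at `p_t = 0.1` keeps about 81%. That gap is the mechanism that shifts training toward hard examples, and it is also why a mislabeled example, which looks "hard", can receive outsized weight.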

Here is a table to compare focal loss and weighted cross-entropy.

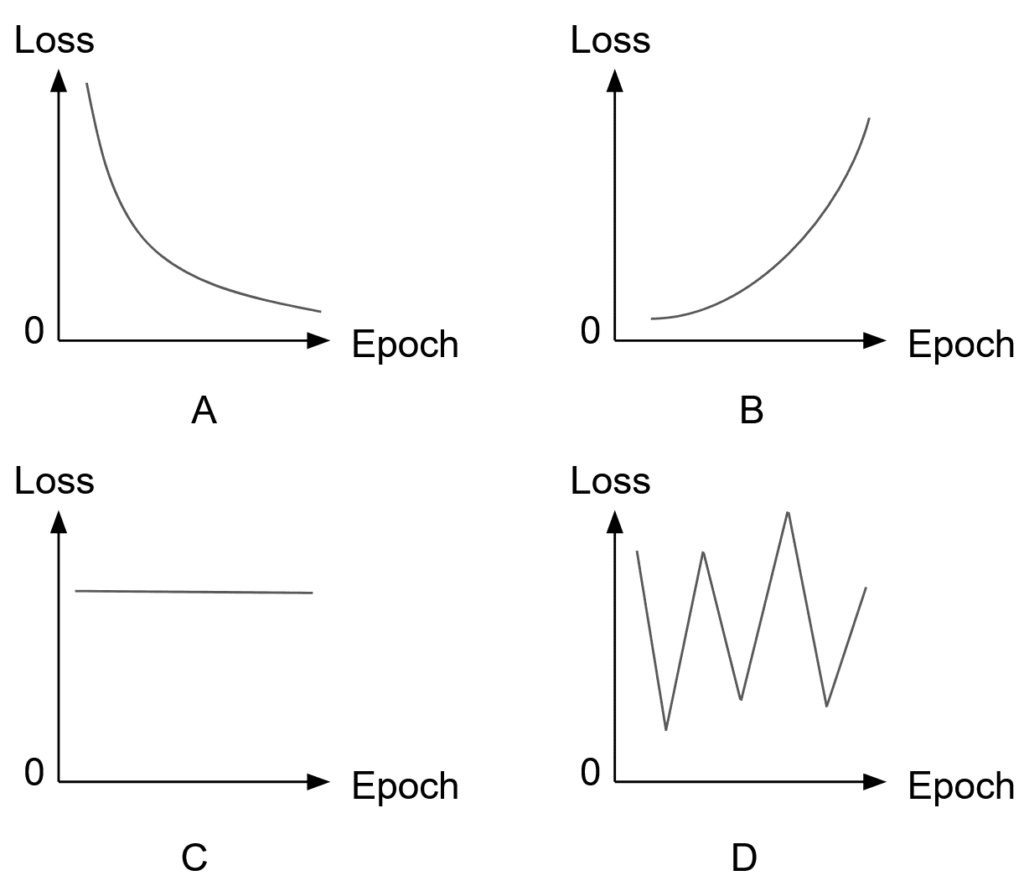

The figure below compares Cross-Entropy, Weighted Cross-Entropy, and Focal Loss.