What is focal loss, and why does it help with class imbalance?

Answer

Focal loss augments cross-entropy with a modulating term $(1 - p_t)^{\gamma}$ and an optional balancing weight $\alpha_t$ to suppress gradients from easy, majority-class examples and amplify learning from hard or minority-class examples. This improves performance in severe class-imbalance settings when the hyperparameters are properly tuned.

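(1) Focal loss formula:

$$\mathrm{FL}(p_t) = -\alpha_t \, (1 - p_t)^{\gamma} \, \log(p_t)$$

Where:

$p_t$ is the model probability for the ground-truth class;

$\gamma \ge 0$ is the focusing parameter that down-weights easy examples ($\gamma = 0$ recovers weighted cross-entropy);

$\alpha_t$ is an optional class-balancing weight for class $t$.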

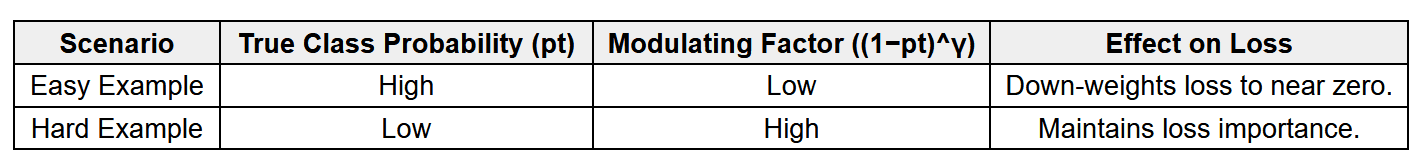
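The formula can be sketched in a few lines of NumPy. This is a minimal illustration for the binary case, not a reference implementation: the function name `focal_loss`, the default `gamma=2.0`, the `eps` clipping constant, and the convention that negatives receive weight `1 - alpha` are all assumptions made for this example.

```python
import numpy as np

def focal_loss(p, y, gamma=2.0, alpha=None, eps=1e-12):
    """Per-example binary focal loss (illustrative sketch).

    p:     predicted probability of the positive class, shape (N,)
    y:     ground-truth labels in {0, 1}, shape (N,)
    gamma: focusing parameter; gamma = 0 gives plain cross-entropy
    alpha: optional weight for the positive class; negatives get 1 - alpha
           (an assumed convention for this sketch)
    """
    p = np.asarray(p, dtype=float)
    y = np.asarray(y)
    # p_t is the probability the model assigns to the ground-truth class.
    p_t = np.where(y == 1, p, 1.0 - p)
    # Clip to avoid log(0) for extremely confident wrong predictions.
    p_t = np.clip(p_t, eps, 1.0)
    # Modulating factor (1 - p_t)^gamma times the cross-entropy term.
    loss = -((1.0 - p_t) ** gamma) * np.log(p_t)
    if alpha is not None:
        # alpha_t: class-balancing weight selected by the true label.
        alpha_t = np.where(y == 1, alpha, 1.0 - alpha)
        loss = alpha_t * loss
    return loss
```

Calling `focal_loss(p, y, gamma=0.0)` should give the same values as ordinary binary cross-entropy, which is a convenient sanity check when experimenting with other values of `gamma`.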

(2) Modulation: The factor $(1 - p_t)^{\gamma}$ reduces the loss from well-classified (high-confidence) examples, concentrating gradients on hard / low-confidence examples.
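As a worked illustration of the modulating factor, take $\gamma = 2$ and three values of $p_t$ (both chosen here purely for easy arithmetic, not taken from any experiment):

$$
\begin{aligned}
p_t = 0.9 &: \quad (1 - 0.9)^{2} = 0.01 \\
p_t = 0.5 &: \quad (1 - 0.5)^{2} = 0.25 \\
p_t = 0.1 &: \quad (1 - 0.1)^{2} = 0.81
\end{aligned}
$$

Under these assumptions, the confidently correct example keeps only 1% of its cross-entropy loss, while the badly misclassified example keeps 81% of it.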

(3) Class imbalance effect:

In cross-entropy, abundant, easy negatives still produce a large total gradient, dominating learning.

Focal loss down-weights those contributions, ensuring rare/difficult samples have a stronger influence.
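A small numerical sketch can make this concrete. The counts and probabilities below (10,000 easy negatives at $p_t = 0.99$, 10 hard positives at $p_t = 0.10$) are made up for illustration, the helper name `loss_share_from_easy_group` is hypothetical, and summed loss is used as a rough proxy for each group's influence on the gradient.

```python
import numpy as np

def loss_share_from_easy_group(n_easy=10_000, p_easy=0.99,
                               n_hard=10, p_hard=0.10, gamma=0.0):
    """Fraction of the summed loss contributed by the easy group.

    p_easy and p_hard are p_t values (probability of the true class).
    gamma = 0 corresponds to plain cross-entropy; alpha_t is omitted.
    All default numbers are illustrative assumptions.
    """
    def per_example(p_t):
        # Focal loss without the alpha_t weight.
        return -((1.0 - p_t) ** gamma) * np.log(p_t)

    easy_total = n_easy * per_example(p_easy)
    hard_total = n_hard * per_example(p_hard)
    return easy_total / (easy_total + hard_total)

ce_share = loss_share_from_easy_group(gamma=0.0)
fl_share = loss_share_from_easy_group(gamma=2.0)
print(f"cross-entropy:   {ce_share:.1%} of total loss from easy negatives")
print(f"focal (gamma=2): {fl_share:.2%} of total loss from easy negatives")
```

With these made-up numbers, the easy negatives account for roughly four fifths of the summed cross-entropy loss but well under 1% of the summed focal loss, so the few hard positives dominate the training signal instead.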

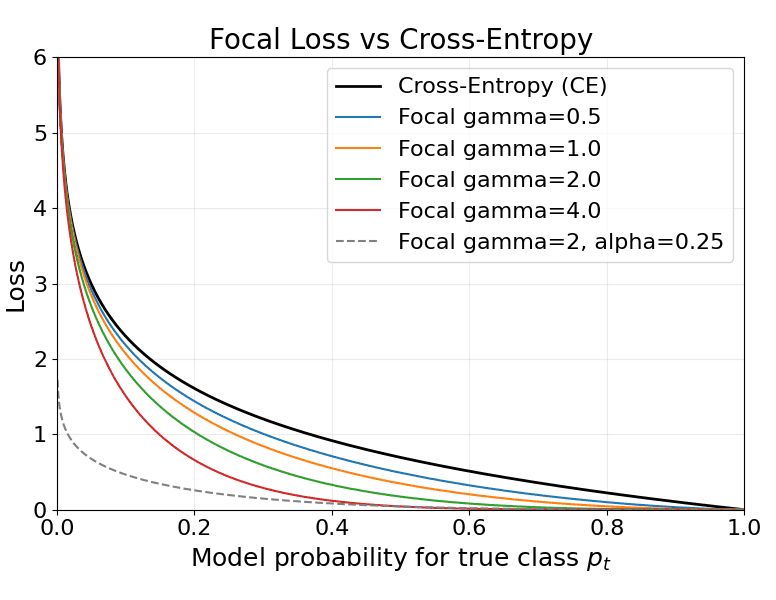

The plot shows cross-entropy and focal-loss curves for several values of $\gamma$ and an example value of $\alpha_t$.
