Why use non-linear activation functions in neural networks in machine learning, and what limitations would a network face if only linear activation functions were used?

Answer

The benefits of using non-linear activation functions in neural networks are as follows:

(1) Introduce Non-Linearity: Enable learning complex patterns in data.

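(2) Model Complexity: Allow the network to represent a very broad class of functions. By the universal approximation theorem, a network with a suitable non-linear activation and enough hidden units can approximate any continuous function on a bounded input range to arbitrary accuracy.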

(3) Enable Multiple Layers to Add Power: Let successive layers build complex, abstract representations rather than simple linear mappings. Without a non-linearity between them, stacked layers collapse into an equivalent single linear transformation, so depth confers no additional modeling capacity.
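
The collapse mentioned in point (3) can be checked by hand for two layers. The symbols below ($W_1, b_1, W_2, b_2$ for the weights and biases, $x$ for the input, $\sigma$ for a non-linear activation) are notation assumed here for illustration, not taken from the original answer.

```latex
\begin{aligned}
h &= W_1 x + b_1 \\
y &= W_2 h + b_2 \\
  &= W_2 (W_1 x + b_1) + b_2 \\
  &= (W_2 W_1)\, x + (W_2 b_1 + b_2)
\end{aligned}
```

The result is a single layer with weight $W_2 W_1$ and bias $W_2 b_1 + b_2$. With a non-linear activation in between, $y = W_2\,\sigma(W_1 x + b_1) + b_2$, the matrices can no longer be multiplied together in general, which is what lets the extra layer add capacity.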

The limitations of only linear activations are as follows:

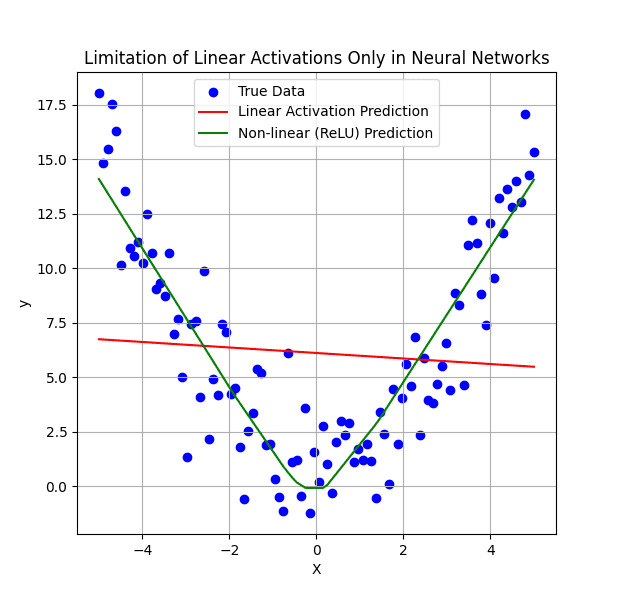

(1) No Depth Advantage: Any multilayer network collapses to a single-layer linear model, so adding layers does not increase modeling power. Regardless of depth, the network behaves like a single linear regression.
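
A minimal NumPy sketch of this collapse, assuming a hypothetical three-layer network with random weights and identity activations (the layer sizes and the names `deep_linear` and `single_layer` are made up for illustration):

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical 3-layer network: 3 inputs -> 5 -> 4 -> 2 outputs,
# with identity (linear) activations between the layers.
W1, b1 = rng.normal(size=(5, 3)), rng.normal(size=5)
W2, b2 = rng.normal(size=(4, 5)), rng.normal(size=4)
W3, b3 = rng.normal(size=(2, 4)), rng.normal(size=2)

def deep_linear(x):
    """Forward pass through all three layers, no non-linearity."""
    h1 = W1 @ x + b1
    h2 = W2 @ h1 + b2
    return W3 @ h2 + b3

# Fold the three layers into one equivalent weight matrix and bias.
W_eq = W3 @ W2 @ W1
b_eq = W3 @ (W2 @ b1 + b2) + b3

def single_layer(x):
    """One affine map that should reproduce the deep network."""
    return W_eq @ x + b_eq

x = rng.normal(size=3)
outputs_match = np.allclose(deep_linear(x), single_layer(x))
print(outputs_match)
```

The two functions agree up to floating-point rounding for any input `x`, so the extra layers only change how the same affine map is parameterized.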

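(2) Inability to Learn Non‑Linear Boundaries: A linear network can only separate classes with a straight line or hyperplane, so it works only on linearly separable data. Tasks that require non‑linear decision boundaries, such as XOR, cannot be solved.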

The following example shows the limitation of using linear activations only in neural networks.
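
As one possible illustration, here is a small NumPy sketch on the XOR problem. It is an assumed example, not necessarily the one the original answer had in mind. The hidden-layer weights (`W1`, `b1`, `w2`) are chosen by hand for illustration rather than learned.

```python
import numpy as np

# XOR truth table: the classic data set that is not linearly separable.
X = np.array([[0.0, 0.0], [0.0, 1.0], [1.0, 0.0], [1.0, 1.0]])
y = np.array([0.0, 1.0, 1.0, 0.0])

# Best possible linear (affine) model, fitted by least squares.
A = np.hstack([X, np.ones((4, 1))])          # add a bias column
coef, *_ = np.linalg.lstsq(A, y, rcond=None)
linear_pred = A @ coef                       # about 0.5 for every input

# Hand-picked two-unit hidden layer (illustrative weights, not trained).
W1 = np.array([[1.0, 1.0], [1.0, 1.0]])
b1 = np.array([0.0, -1.0])
w2 = np.array([1.0, -2.0])

pre_activation = X @ W1.T + b1
relu_pred = np.maximum(0.0, pre_activation) @ w2   # with ReLU
no_activation_pred = pre_activation @ w2           # same weights, no ReLU

print("linear fit:    ", np.round(linear_pred, 3))
print("with ReLU:     ", relu_pred)
print("without ReLU:  ", no_activation_pred)
```

The least-squares linear model can do no better than predicting roughly 0.5 everywhere, while the same two-layer weights reproduce XOR exactly once a ReLU is placed between the layers. Removing the ReLU turns the network back into a linear function of the inputs, and the XOR targets are missed again.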
