What are the common strategies for Hyperparameter Tuning in deep learning?

Answer

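Hyperparameter tuning in deep learning is the process of choosing the configuration settings that are fixed before training and control the learning process, such as the learning rate and batch size, so that validation performance is as good as possible. Common strategies include: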

(1) Manual/Heuristic Search: Start with values from prior work or common practice and iteratively adjust based on validation performance.

(2) Grid Search: Exhaustively evaluates all combinations over a predefined, discrete grid of hyperparameter values; simple but scales poorly with dimensionality.

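(3) Random Search: Samples hyperparameter values at random from predefined ranges or distributions; often more efficient than grid search when only a few hyperparameters strongly affect performance.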

(4) Bayesian Optimization: Fits a probabilistic surrogate model to the results of past trials and uses it to suggest the next set of hyperparameters to try, balancing exploration and exploitation.
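To make the difference between strategies (2) and (3) concrete, here is a minimal sketch in plain Python. The function `validation_score` is a hypothetical toy objective standing in for "train a model and measure validation performance"; its shape (best near a learning rate of 1e-3 and a batch size of 64) is an assumption made purely for illustration, as are the search ranges.

```python
import itertools
import math
import random


def validation_score(learning_rate, batch_size):
    """Toy stand-in for a real training run (hypothetical, not measured data).

    The score is highest (0) at learning_rate=1e-3 and batch_size=64.
    """
    lr_term = -(math.log10(learning_rate) + 3.0) ** 2
    bs_term = -0.1 * (math.log2(batch_size) - 6.0) ** 2
    return lr_term + bs_term


def grid_search(lr_values, batch_sizes):
    """Evaluate every combination on a fixed grid and return the best one."""
    best = None
    for lr, bs in itertools.product(lr_values, batch_sizes):
        score = validation_score(lr, bs)
        if best is None or score > best[0]:
            best = (score, lr, bs)
    return best


def random_search(n_trials, batch_sizes, seed=0):
    """Sample configurations at random and return the best one seen."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        # Sample the learning rate log-uniformly between 1e-5 and 1e-1.
        lr = 10 ** rng.uniform(-5, -1)
        bs = rng.choice(batch_sizes)
        score = validation_score(lr, bs)
        if best is None or score > best[0]:
            best = (score, lr, bs)
    return best


grid_best = grid_search([1e-4, 1e-3, 1e-2], [32, 64])
random_best = random_search(20, [32, 64])
print("grid search best (score, lr, batch size):", grid_best)
print("random search best (score, lr, batch size):", random_best)
```

Grid search costs one evaluation per combination, so its cost multiplies with every added hyperparameter, while random search runs a fixed budget of trials regardless of how many hyperparameters are sampled. In practice each call to `validation_score` would be a full training run.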

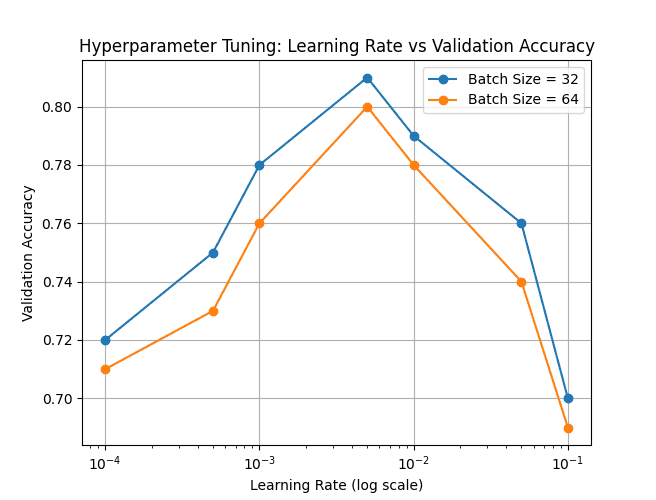

The plot above illustrates how validation accuracy varies with the learning rate (on a log scale) for two batch-size settings (32 and 64).
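A sweep like the one in the plot can be organized roughly as follows. This is only a sketch: `toy_validation_accuracy` is a hypothetical placeholder for a real training run, its values are not measured results, and the learning-rate range and number of points are assumptions.

```python
import math


def log_spaced(low, high, num):
    """Return `num` values evenly spaced on a log10 scale from low to high."""
    lo, hi = math.log10(low), math.log10(high)
    step = (hi - lo) / (num - 1)
    return [10 ** (lo + i * step) for i in range(num)]


def toy_validation_accuracy(learning_rate, batch_size):
    """Placeholder for training and evaluating a model (NOT measured data).

    A smooth bump in log10(learning_rate), shifted slightly by batch size.
    """
    center = -3.0 + 0.1 * math.log2(batch_size / 32)
    return math.exp(-((math.log10(learning_rate) - center) ** 2))


# Five learning rates from 1e-5 to 1e-1, one decade apart.
learning_rates = log_spaced(1e-5, 1e-1, 5)

# One curve per batch size: a list of (learning_rate, accuracy) pairs.
curves = {
    bs: [(lr, toy_validation_accuracy(lr, bs)) for lr in learning_rates]
    for bs in (32, 64)
}

for bs, points in curves.items():
    for lr, acc in points:
        print(f"batch_size={bs} lr={lr:.0e} accuracy={acc:.3f}")
```

Spacing the learning rates evenly in log space gives each order of magnitude equal coverage, which is why such plots use a logarithmic x-axis.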
