What is the ROC Curve, and how is it plotted?

Answer

The ROC (Receiver Operating Characteristic) curve is a graphical tool for evaluating a binary classification model. It plots the model's True Positive Rate against its False Positive Rate as the classification threshold is varied.

Key Concepts:

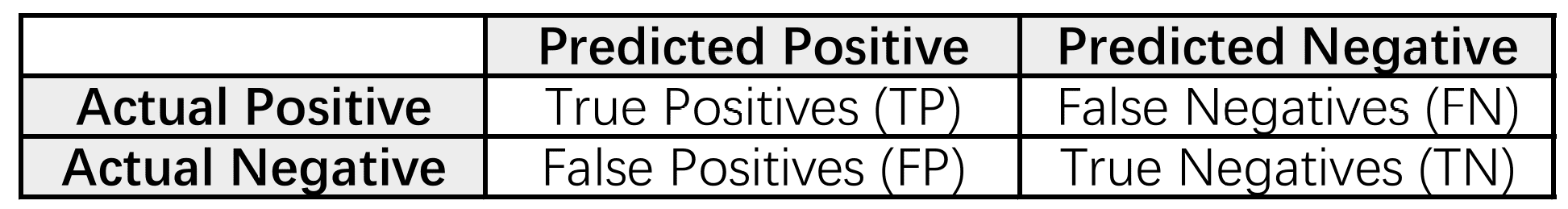

True Positive Rate (TPR): Also called sensitivity or recall, it measures the proportion of actual positives correctly identified.

False Positive Rate (FPR): The proportion of actual negatives incorrectly classified as positive.
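Using the standard confusion-matrix counts at a given threshold (TP true positives, FN false negatives, FP false positives, TN true negatives), the two rates are:

$$
\mathrm{TPR} = \frac{TP}{TP + FN}, \qquad \mathrm{FPR} = \frac{FP}{FP + TN}
$$

Since specificity is $TN / (TN + FP)$, FPR equals $1 - \text{specificity}$.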

Thresholds: Classification models output scores (often probabilities). A threshold determines the cutoff for labeling a prediction as positive or negative. The ROC curve is built by varying this threshold.
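A minimal Python sketch of how a single threshold turns scores into labels. It assumes scores are positive-class probabilities and that a score exactly equal to the threshold counts as positive (a common convention, not a universal rule). The helper name `apply_threshold` and the example scores are hypothetical.

```python
def apply_threshold(scores, threshold):
    """Label each score 1 (positive) if it is at or above the threshold, else 0 (negative).

    The ">=" tie-breaking rule is an assumed convention.
    """
    return [1 if s >= threshold else 0 for s in scores]


# Hypothetical positive-class scores for four examples.
scores = [0.9, 0.65, 0.4, 0.2]
print(apply_threshold(scores, 0.5))  # [1, 1, 0, 0]
print(apply_threshold(scores, 0.3))  # [1, 1, 1, 0]
```

Lowering the threshold from 0.5 to 0.3 turns one more example positive; the ROC curve records how TPR and FPR change across such threshold choices.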

Steps to Plot the ROC Curve:

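Train a Model: Train the binary classification model on a labelled training set.

Generate Probabilities: Instead of predicting class labels directly, generate a probability score for the positive class for each example in a held-out test set.

Calculate TPR and FPR: For each threshold (commonly every distinct score), label the examples scoring at or above the threshold as positive, count the true positives, false positives, true negatives, and false negatives, and compute TPR and FPR from those counts.

Plot the Curve: Plot TPR against FPR for every threshold and connect the points in threshold order to form the ROC curve.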
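The steps above can be sketched in plain Python. This is a minimal illustration rather than a library implementation: `roc_points` is a hypothetical helper, the labels and scores are made up, and it assumes 0/1 labels with at least one example of each class. It uses every distinct score as a threshold, plus an infinite threshold so the curve starts at (0, 0).

```python
def roc_points(y_true, scores):
    """Compute ROC points by sweeping the threshold over every distinct score.

    Assumes y_true holds 0/1 labels (1 = positive) with at least one of each,
    and that a score at or above the threshold is predicted positive.
    """
    n_pos = sum(1 for y in y_true if y == 1)
    n_neg = len(y_true) - n_pos
    # An infinite threshold predicts nothing as positive, giving the point (0, 0);
    # the lowest distinct score predicts everything as positive, giving (1, 1).
    thresholds = [float("inf")] + sorted(set(scores), reverse=True)
    fpr, tpr = [], []
    for t in thresholds:
        tp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 1)
        fp = sum(1 for y, s in zip(y_true, scores) if s >= t and y == 0)
        tpr.append(tp / n_pos)  # TP / (TP + FN)
        fpr.append(fp / n_neg)  # FP / (FP + TN)
    return fpr, tpr, thresholds


# Hypothetical held-out labels and model scores, for illustration only.
y_true = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.2]

fpr, tpr, thresholds = roc_points(y_true, scores)
for t, fp_rate, tp_rate in zip(thresholds, fpr, tpr):
    print(f"threshold={t}: FPR={fp_rate:.2f}, TPR={tp_rate:.2f}")
```

The resulting `fpr` and `tpr` lists can be handed to a plotting library, for example matplotlib's `plt.plot(fpr, tpr)`. This sketch recounts every example at each threshold, which is quadratic but easy to follow; scikit-learn's `sklearn.metrics.roc_curve(y_true, y_score)` returns `fpr`, `tpr`, and `thresholds` arrays more efficiently, though by default it drops some intermediate thresholds that would not change the plotted curve.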

In an ROC curve:

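The x-axis shows FPR (1 − specificity).

The y-axis shows TPR (sensitivity or recall).

Each point is the (FPR, TPR) pair for one threshold. Lowering the threshold can only keep or increase both rates, so the points run from (0, 0), where nothing is predicted positive, to (1, 1), where everything is.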
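As a worked example with hypothetical counts: suppose a test set has 4 actual positives and 4 actual negatives, and at some threshold the model yields TP = 3, FN = 1, FP = 1, and TN = 3. That threshold contributes one point to the curve:

$$
\mathrm{FPR} = \frac{FP}{FP + TN} = \frac{1}{1 + 3} = 0.25, \qquad
\mathrm{TPR} = \frac{TP}{TP + FN} = \frac{3}{3 + 1} = 0.75
$$

so it is plotted at (0.25, 0.75).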