Can you explain what a fully connected layer is?

Answer

A Fully Connected (FC) Layer, or Dense Layer, is one where every neuron connects to all neurons in the previous layer. It computes a weighted sum of inputs, adds a bias, and applies an activation function to introduce non-linearity. This allows the network to learn complex feature combinations.
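As a minimal sketch of that computation, the NumPy snippet below implements one FC layer's forward pass. The function name `dense_forward`, the example values, and the choice of ReLU as the activation are assumptions made for illustration; any non-linear activation could take its place.

```python
import numpy as np

def dense_forward(x, W, b):
    """Forward pass of one fully connected layer with a ReLU activation."""
    # Each row of W holds the weights of one output neuron, so every
    # output neuron sees every input value.
    z = W @ x + b
    # ReLU (an assumed choice of activation) introduces the non-linearity.
    return np.maximum(z, 0.0)

# Hypothetical example: 3 inputs, 2 output neurons.
x = np.array([1.0, -2.0, 0.5])
W = np.array([[0.5, -0.25, 1.0],
              [-1.0, 0.5, 2.0]])
b = np.array([0.5, -0.25])

y = dense_forward(x, W, b)
print(y)
```

The weight matrix has one entry per input-output pair, which is why the parameter count of an FC layer grows with the product of its input and output sizes.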

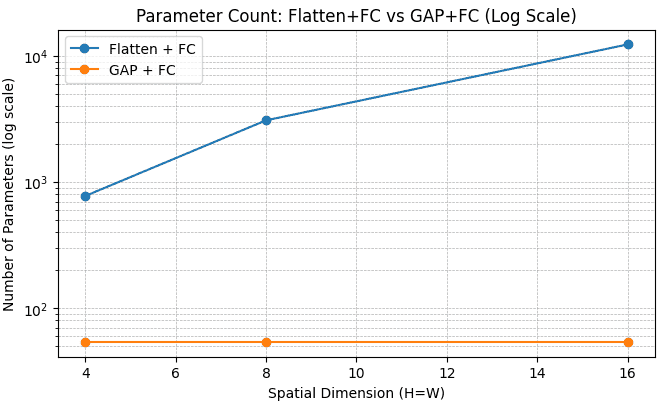

FC layers learn complex combinations of features but can be parameter-heavy, and they lose spatial context when the feature maps are flattened.

Global Average Pooling (GAP) summarizes each feature map into a single value by averaging over its spatial positions. This reduces dimensionality and makes the output less sensitive to where a feature appears, with no added parameters.
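A small sketch of GAP in NumPy, assuming feature maps stored in a `(channels, height, width)` layout. The function name `global_average_pool` and the example array are illustrative only.

```python
import numpy as np

def global_average_pool(feature_maps):
    """Average each channel of a (C, H, W) array over H and W, giving shape (C,)."""
    return feature_maps.mean(axis=(1, 2))

# Hypothetical input: 2 channels, each a 2 x 3 feature map.
fmap = np.arange(2 * 2 * 3, dtype=float).reshape(2, 2, 3)

pooled = global_average_pool(fmap)
print(pooled.shape)
print(pooled)
```

The operation has no weights to learn: the output length equals the number of channels, whatever the spatial size of the input.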

GAP followed by a small FC layer is often used in place of a flatten operation followed by a large FC layer at the end of Convolutional Neural Networks (CNNs) for classification tasks.

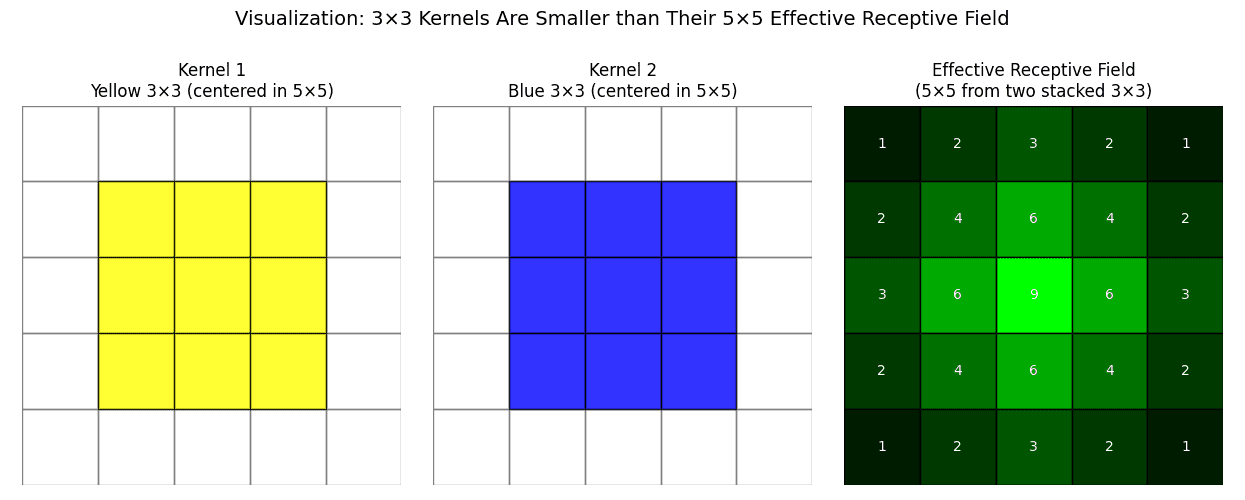

The image below compares parameter counts for Flatten + FC and GAP + FC. The example uses 6 classes and 8 channels.
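The arithmetic behind such a comparison can be sketched as follows. The 6 classes and 8 channels come from the text. The 4 x 4 spatial size of each feature map is an assumption chosen only for illustration, as is the use of a single FC layer with biases in both cases, so the totals may differ from those in the image.

```python
def flatten_fc_params(channels, height, width, classes):
    """Parameters of one FC layer applied to flattened (C, H, W) feature maps."""
    inputs = channels * height * width
    return inputs * classes + classes  # weights + biases

def gap_fc_params(channels, classes):
    """Parameters of one FC layer applied after GAP (GAP itself has none)."""
    return channels * classes + classes  # weights + biases

CLASSES = 6    # from the text
CHANNELS = 8   # from the text
HEIGHT = 4     # assumed spatial size, not stated in the text
WIDTH = 4      # assumed spatial size, not stated in the text

flatten_total = flatten_fc_params(CHANNELS, HEIGHT, WIDTH, CLASSES)
gap_total = gap_fc_params(CHANNELS, CLASSES)

print("Flatten + FC:", flatten_total)  # 8*4*4*6 + 6 = 774
print("GAP + FC:", gap_total)          # 8*6 + 6 = 54
```

Under these assumptions, the Flatten + FC count grows with the spatial size of the feature maps, while the GAP + FC count depends only on the number of channels and classes.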