Please explain the benefits and drawbacks of random forest.

Answer

Random Forest is a powerful ensemble method that reduces overfitting and improves predictive accuracy by combining many decision trees. However, it trades interpretability and computational efficiency for these benefits and may require careful tuning when dealing with large, imbalanced, or sparse datasets.
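The sketch below makes "combining many decision trees" concrete by building a small forest by hand. It assumes scikit-learn and NumPy are available. The helper names `fit_forest` and `predict_forest` are hypothetical and exist only for this illustration. Each tree is fitted on a bootstrap sample and considers a random subset of features at every split (`max_features="sqrt"`). The ensemble then predicts by majority vote. scikit-learn's own `RandomForestClassifier` combines trees differently: it averages their predicted class probabilities instead of counting hard votes. Treat this as an approximation of the idea, not a copy of the library.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier


def fit_forest(X, y, n_trees=50, seed=0):
    """Hypothetical helper: train n_trees trees, each on a bootstrap sample of (X, y)."""
    rng = np.random.default_rng(seed)
    n = len(X)
    trees = []
    for i in range(n_trees):
        # Bootstrap: draw n row indices with replacement.
        idx = rng.integers(0, n, size=n)
        # max_features="sqrt": each split looks at a random subset of features,
        # which makes the trees less correlated with one another.
        tree = DecisionTreeClassifier(max_features="sqrt", random_state=i)
        tree.fit(X[idx], y[idx])
        trees.append(tree)
    return trees


def predict_forest(trees, X):
    """Hypothetical helper: hard majority vote (assumes integer labels 0..k-1)."""
    votes = np.stack([t.predict(X) for t in trees]).astype(int)  # (n_trees, n_samples)
    # np.bincount(...).argmax() picks the most common label; ties go to the smaller label.
    return np.array([np.bincount(col).argmax() for col in votes.T])


# Usage on synthetic data.
X, y = make_classification(n_samples=1000, n_features=20, n_informative=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=0)

trees = fit_forest(X_train, y_train)
preds = predict_forest(trees, X_test)
acc = (preds == y_test).mean()
print(f"hand-built forest test accuracy: {acc:.3f}")
```

The printed accuracy depends on the synthetic data and seeds. Fitting a single `DecisionTreeClassifier` on the same split and comparing the two scores is a quick way to see the variance reduction.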

Benefits of random forest:

(1) Reduces Overfitting: Aggregating many trees lowers variance.

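(2) Robust to Noise and Outliers: Less sensitive to anomalous data than a single decision tree, since averaging over many trees dampens the effect of individual noisy points.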

(3) Handles High Dimensionality: Works well with many input features.

(4) Estimates Feature Importance: Helps identify influential variables.

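(5) Built-in Bagging: Each tree is trained on a bootstrap sample of the data. This improves generalization and allows out-of-bag error estimation without a separate validation set.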
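Benefits (1), (4), and (5) above can be checked on synthetic data. The sketch below assumes scikit-learn, and its dataset parameters are arbitrary choices for illustration. It does three things:

* compares a single fully grown tree with a 200-tree forest,
* reads the out-of-bag score that bootstrap sampling makes available,
* inspects the forest's impurity-based feature importances.

Because `make_classification` is called with `shuffle=False`, the five informative features occupy columns 0 to 4. That lets us compare their importances against the 15 pure-noise columns.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split
from sklearn.tree import DecisionTreeClassifier

# shuffle=False keeps the 5 informative features in columns 0-4; columns 5-19 are noise.
X, y = make_classification(n_samples=2000, n_features=20, n_informative=5,
                           n_redundant=0, n_repeated=0, shuffle=False, random_state=42)
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3, random_state=42)

# A single fully grown tree: low bias, high variance.
tree = DecisionTreeClassifier(random_state=42).fit(X_train, y_train)

# 200 bootstrapped trees; oob_score=True scores each training row using only
# the trees whose bootstrap sample did not include it.
forest = RandomForestClassifier(n_estimators=200, oob_score=True, random_state=42)
forest.fit(X_train, y_train)

tree_acc = tree.score(X_test, y_test)
forest_acc = forest.score(X_test, y_test)
print(f"single tree test accuracy: {tree_acc:.3f}")
print(f"forest test accuracy:      {forest_acc:.3f}")
print(f"forest out-of-bag score:   {forest.oob_score_:.3f}")

# Impurity-based importances, normalized to sum to 1.
imp = forest.feature_importances_
print(f"mean importance, informative columns 0-4: {imp[:5].mean():.3f}")
print(f"mean importance, noise columns 5-19:      {imp[5:].mean():.3f}")
```

Exact scores depend on the data and seeds. Impurity-based importances can favor features with many distinct values. `sklearn.inspection.permutation_importance` is a common cross-check.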

Drawbacks of random forest:

(1) Less Interpretability: Hard to visualize or explain compared to a single decision tree.

(2) Computational Cost: Training and prediction can be slower with many trees.

(3) Memory Usage: Large forests can consume significant resources.

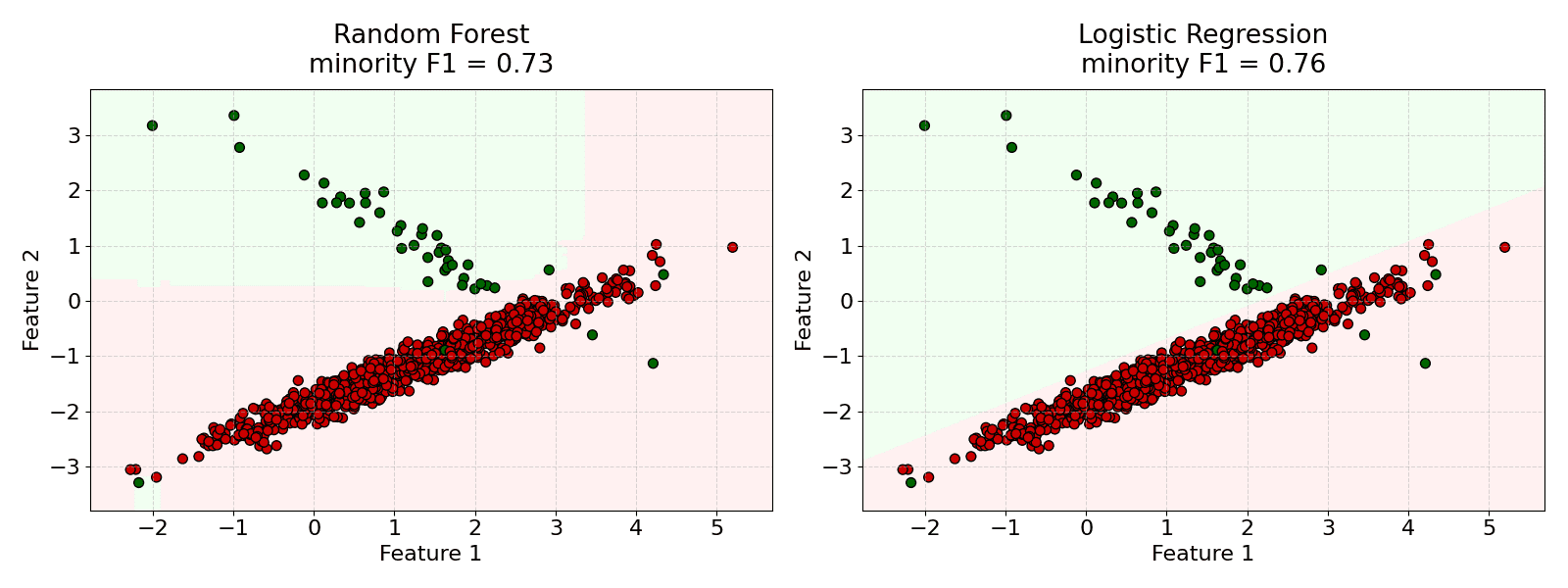

(4) Biased with Imbalanced Data: Class imbalance can lead to predictions biased toward the majority class.

(5) Not Always Optimal for Sparse Data: May underperform other algorithms, such as linear models, on very sparse, high-dimensional datasets (for example, bag-of-words text features).
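Drawback (4) can be observed on a skewed synthetic dataset. This sketch again assumes scikit-learn and uses an arbitrary 95/5 class split. Overall accuracy stays high on such data, so minority-class recall is the number to watch.

The sketch also shows one common mitigation: calling `predict_proba` and applying a threshold below 0.5. Lowering the threshold can only keep or raise minority-class recall, at the cost of more false positives. The 0.2 threshold is an illustrative value, not a recommendation.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score, recall_score
from sklearn.model_selection import train_test_split

# Roughly 95% class 0 (majority) and 5% class 1 (minority); flip_y=0 disables label noise.
X, y = make_classification(n_samples=5000, n_features=20, n_informative=5,
                           weights=[0.95, 0.05], flip_y=0, random_state=0)
# stratify=y keeps the same class ratio in the train and test splits.
X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.3,
                                                    stratify=y, random_state=0)

forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X_train, y_train)

# Default predict(): class 1 only when its averaged probability exceeds class 0's.
pred_default = forest.predict(X_test)
acc = accuracy_score(y_test, pred_default)
recall_default = recall_score(y_test, pred_default)  # recall of minority class 1

# A lower decision threshold on the minority-class probability.
proba_minority = forest.predict_proba(X_test)[:, 1]
pred_low = (proba_minority >= 0.2).astype(int)
recall_low = recall_score(y_test, pred_low)

print(f"accuracy (default threshold):        {acc:.3f}")
print(f"minority recall (default threshold): {recall_default:.3f}")
print(f"minority recall (threshold 0.2):     {recall_low:.3f}")
```

Another option is the `class_weight` parameter of `RandomForestClassifier`. It accepts `"balanced"` or `"balanced_subsample"` and reweights classes during tree construction. Which approach helps more depends on the dataset.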

The example below demonstrates that a random forest can underperform on an imbalanced dataset.