What are the key advantages of using small convolutional kernels, such as 3×3, over utilizing a few larger kernels in deep learning architectures?

Answer

Using small convolutional kernels instead of a few larger kernels offers several significant advantages in deep learning architectures:

(1) Deeper Networks & More Non-Linearity: Stacking several 3×3 layers makes the network deeper and inserts a non-linear activation after each layer. For example, three stacked 3×3 layers cover the same 7×7 receptive field as a single 7×7 layer, but apply three non-linearities instead of one.

(2) Reduced Parameters: Multiple small kernels can achieve the same receptive field as a larger one, but with fewer parameters.

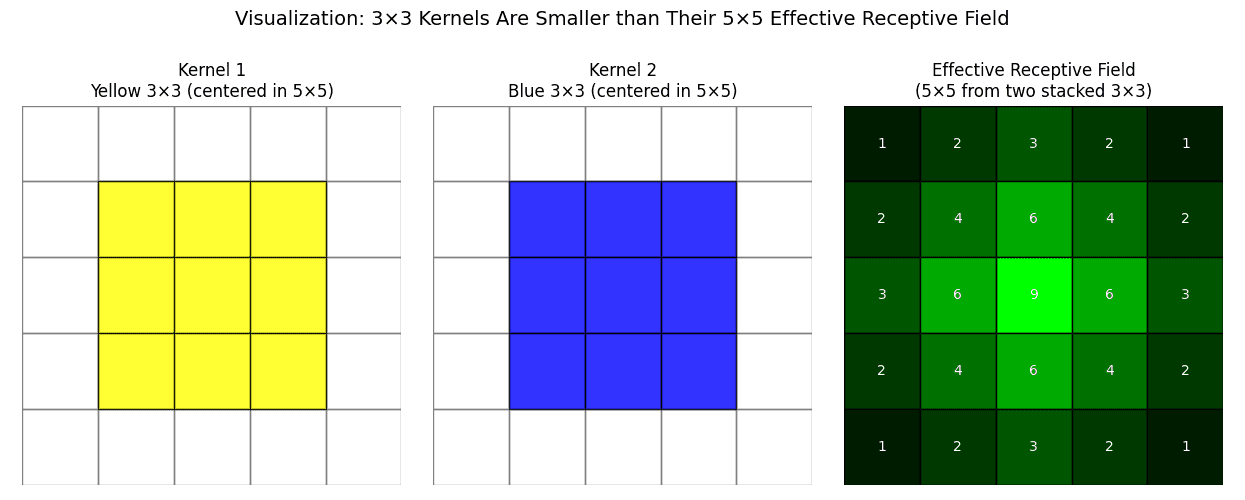

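Example: Two stacked 3×3 layers (2 × 3 × 3 = 18 weights per input/output channel pair, ignoring biases) have the same 5×5 receptive field as a single 5×5 layer (5 × 5 = 25 weights per input/output channel pair), so the stacked version needs fewer parameters.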

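(3) Computational Efficiency: For feature maps of the same size, the number of multiply-accumulate operations in a convolution grows with the number of kernel weights, so fewer parameters generally mean lower computation costs during training and inference.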

(4) Gradual Receptive Field Expansion: Successive 3×3 convolutions progressively build a larger receptive field while maintaining fine detail. Each 3×3 filter looks only at a pixel's immediate neighborhood, which suits local features such as textures and edges, and deeper layers combine these local responses into larger patterns.
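The parameter and receptive-field arithmetic behind points (2) and (4) can be checked with a few lines of Python. This is only a minimal sketch under stated assumptions: stride 1, no dilation, the same number of input and output channels in every layer, and no bias terms. The helper `stacked_conv_stats` and the channel width of 64 are hypothetical, chosen purely for illustration.

```python
def stacked_conv_stats(kernel_size, num_layers, channels):
    """Receptive field and weight count for stacked k x k convolutions.

    Assumes stride 1, no dilation, and `channels` input and output
    channels in every layer; bias terms are ignored.
    """
    # Each stride-1 layer widens the receptive field by (kernel_size - 1).
    receptive_field = 1 + num_layers * (kernel_size - 1)
    # Each layer holds kernel_size^2 weights per input/output channel pair.
    weights = num_layers * kernel_size ** 2 * channels ** 2
    return receptive_field, weights


channels = 64  # illustrative channel width, not taken from the text
for small_layers, large_kernel in [(2, 5), (3, 7)]:
    rf_small, w_small = stacked_conv_stats(3, small_layers, channels)
    rf_large, w_large = stacked_conv_stats(large_kernel, 1, channels)
    print(
        f"{small_layers} x 3x3: receptive field {rf_small}, {w_small} weights | "
        f"1 x {large_kernel}x{large_kernel}: receptive field {rf_large}, {w_large} weights"
    )
```

Under these assumptions, the stacked 3×3 layers match the receptive field of the single large kernel in both comparisons while using fewer weights, and the gap widens as the target receptive field grows.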
