How do Convolutional Neural Networks achieve parameter sharing? Why is it beneficial?

Answer

Convolutional Neural Networks (CNNs) share parameters by using the same convolutional filter across different spatial locations, enabling them to learn location-independent features efficiently with fewer parameters and better generalization.

How CNNs Achieve Parameter Sharing:

(1) Convolutional Filters/Kernels: A small matrix of learnable weights (the filter) is defined.

(2) Sliding Window Operation: This filter slides across the entire input image (or feature map).

(3) Weight Reuse: The same weights within that filter are used to compute outputs at every spatial location where the filter is applied.
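
The three steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production implementation: the function name `conv2d_valid`, the 3x3 input, and the 2x2 filter values are all assumptions chosen for readability, and the sketch uses stride 1 with no padding.

```python
def conv2d_valid(image, kernel):
    """Slide one shared kernel over a 2-D input (stride 1, no padding)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = [[0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            # The same kernel weights are reused at every position (i, j).
            output[i][j] = sum(
                kernel[a][b] * image[i + a][j + b]
                for a in range(kh)
                for b in range(kw)
            )
    return output


# Hypothetical toy input and filter, for illustration only.
image = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
kernel = [
    [1, 0],
    [0, 1],
]

feature_map = conv2d_valid(image, kernel)
print(feature_map)  # [[6, 8], [12, 14]]
```

All four output values come from the same four weights; a fully connected layer would instead learn a separate weight for every input-output pair.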

Why Parameter Sharing is Beneficial:

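(1) Reduced Parameters: A convolutional layer learns one small set of weights per filter, regardless of input size, so it has far fewer learnable parameters than a fully connected layer over the same input.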

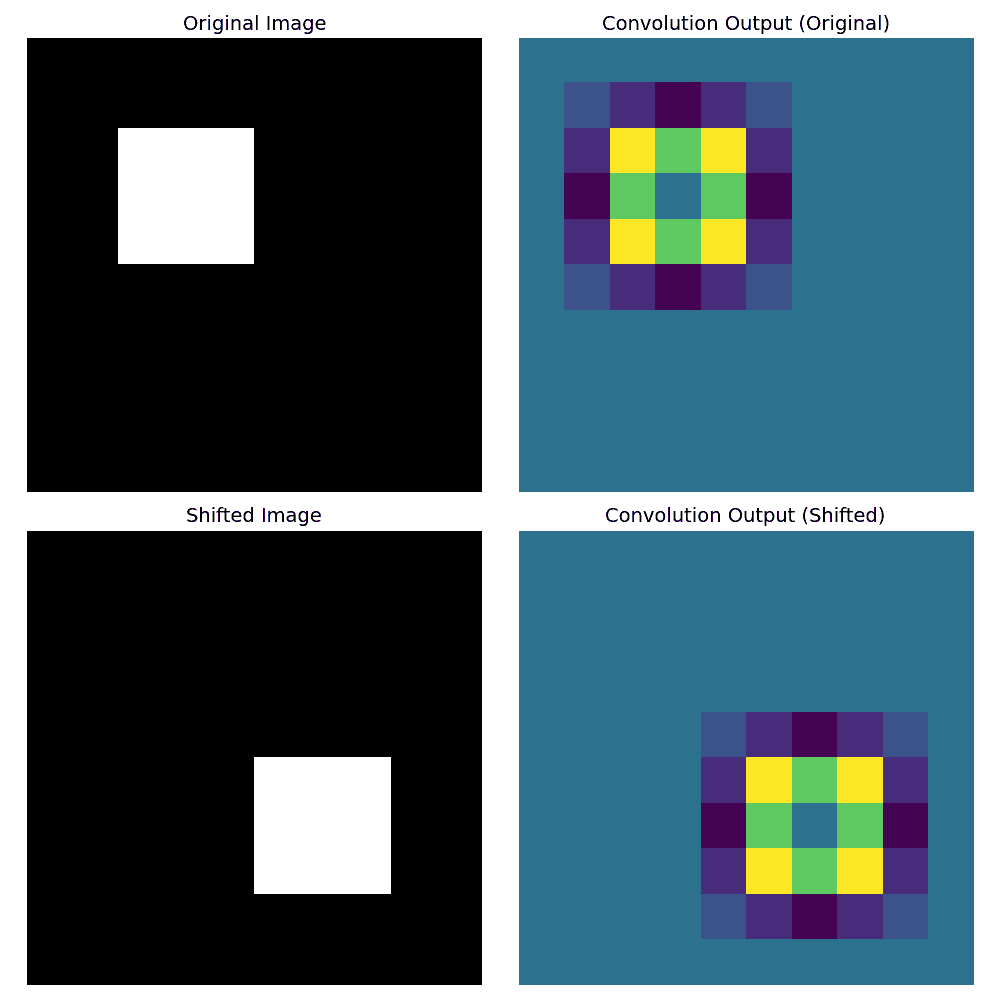

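(2) Translation equivariance: Because the same filter is applied at every position, a feature is detected wherever it appears in the image, and shifting the input shifts the filter's response by the same amount.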

The following example demonstrates translation equivariance using a CNN-like convolution with a shared filter.
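
A minimal sketch of such an example, written in plain Python and in one dimension for brevity. The helper `conv1d_valid`, the signal values, and the two-tap filter are illustrative assumptions rather than the original code.

```python
def conv1d_valid(signal, kernel):
    """Apply one shared kernel at every position (stride 1, no padding)."""
    k = len(kernel)
    return [
        sum(kernel[a] * signal[i + a] for a in range(k))
        for i in range(len(signal) - k + 1)
    ]


kernel = [1, -1]  # hypothetical filter that responds to changes in value

signal = [0, 0, 1, 1, 0, 0, 0, 0]
shifted = [0, 0, 0, 0, 1, 1, 0, 0]  # same pattern, moved 2 positions right

response = conv1d_valid(signal, kernel)
shifted_response = conv1d_valid(shifted, kernel)

print(response)          # [0, -1, 0, 1, 0, 0, 0]
print(shifted_response)  # [0, 0, 0, -1, 0, 1, 0]

# Equivariance: shifting the input by 2 shifts the response by 2.
print(shifted_response == [0, 0] + response[:-2])  # True
```

The filter produces the same response pattern in both cases, only moved by the same two positions as the input. No position-specific weights were needed to detect the pattern at its new location.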

(3) Improved Generalization: Less prone to overfitting due to fewer parameters.

(4) Computational Efficiency: Fewer weights to store and update lowers memory use and can speed up training and inference.
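
The parameter reduction in benefit (1) can be made concrete with some quick arithmetic. The layer sizes below (a 28x28 grayscale input, a dense layer with 100 units, and a convolutional layer with sixteen 3x3 filters) are hypothetical values picked only to illustrate the scale of the difference.

```python
def dense_params(n_inputs, n_units):
    """Weights plus one bias per unit in a fully connected layer."""
    return n_inputs * n_units + n_units


def conv_params(kernel_h, kernel_w, in_channels, n_filters):
    """Weights plus one bias per filter; independent of input height/width."""
    return (kernel_h * kernel_w * in_channels + 1) * n_filters


# Illustrative sizes (assumptions, not taken from any specific model).
dense = dense_params(n_inputs=28 * 28, n_units=100)
conv = conv_params(kernel_h=3, kernel_w=3, in_channels=1, n_filters=16)

print(dense)  # 78500
print(conv)   # 160
```

The convolutional count does not depend on the image size at all, because each filter's weights are shared across every spatial position.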
