What is a Multi-Layer Perceptron (MLP)? How does it overcome Perceptron limitations?

Answer

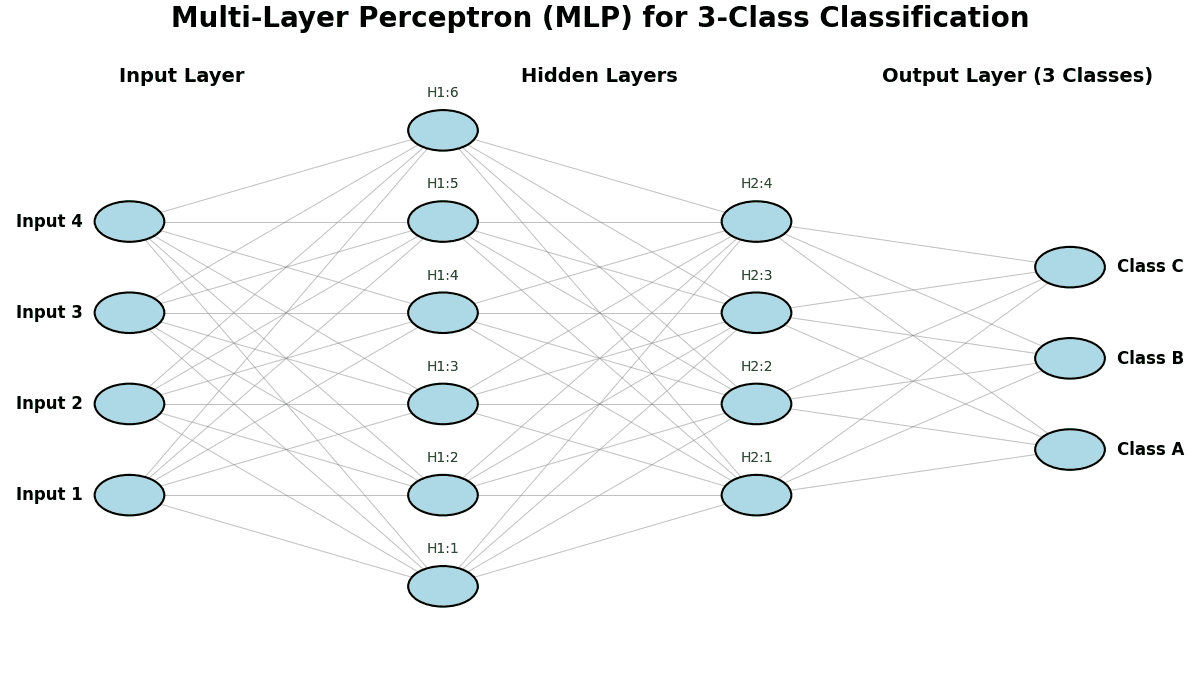

A Multi-Layer Perceptron (MLP) is a feedforward neural network with one or more hidden layers between the input and output layers. Each hidden layer applies a non-linear activation function (such as ReLU, sigmoid, or tanh) to a weighted sum of its inputs, which lets the network model complex relationships. MLPs can be used for classification, regression, and function approximation. An MLP is trained with backpropagation, which computes the gradient of the loss with respect to every weight so that a gradient-based optimizer can adjust the weights to reduce the error.
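To make the forward pass and the backpropagation update concrete, here is a minimal sketch in plain Python. It assumes a tiny network with two inputs, two tanh hidden units, one linear output, and a squared-error loss; the function names, starting weights, and learning rate are illustrative choices, not part of the original answer.

```python
import math

def forward(x, W1, b1, W2, b2):
    """Forward pass: input -> tanh hidden layer -> linear output."""
    h = [math.tanh(sum(w * xi for w, xi in zip(row, x)) + b)
         for row, b in zip(W1, b1)]
    y = sum(w * hj for w, hj in zip(W2, h)) + b2
    return h, y

def train_step(x, target, W1, b1, W2, b2, lr=0.1):
    """One gradient-descent step on the loss L = 0.5 * (y - target)^2.

    Returns the updated parameters and the loss measured BEFORE the update.
    """
    h, y = forward(x, W1, b1, W2, b2)
    err = y - target                      # dL/dy

    # Gradients for the output layer.
    grad_W2 = [err * hj for hj in h]
    grad_b2 = err

    # Backpropagate through tanh: d tanh(z)/dz = 1 - tanh(z)^2.
    delta = [err * W2[j] * (1.0 - h[j] ** 2) for j in range(len(h))]
    grad_W1 = [[d * xi for xi in x] for d in delta]
    grad_b1 = delta

    # Gradient-descent update of every weight and bias.
    W1 = [[w - lr * g for w, g in zip(row, grow)]
          for row, grow in zip(W1, grad_W1)]
    b1 = [b - lr * g for b, g in zip(b1, grad_b1)]
    W2 = [w - lr * g for w, g in zip(W2, grad_W2)]
    b2 = b2 - lr * grad_b2
    return W1, b1, W2, b2, 0.5 * err ** 2

# Illustrative starting weights and a single training example (assumptions).
W1, b1 = [[0.5, -0.3], [0.2, 0.8]], [0.0, 0.0]
W2, b2 = [0.7, -0.4], 0.0
x, target = [1.0, 2.0], 1.0

W1, b1, W2, b2, loss_before = train_step(x, target, W1, b1, W2, b2)
W1, b1, W2, b2, loss_after = train_step(x, target, W1, b1, W2, b2)
print(loss_before, loss_after)  # the second loss should be smaller
```

In practice a library such as PyTorch or scikit-learn would compute these gradients automatically; the manual version is shown only to expose what backpropagation does at each layer.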

Overcoming Limitations:

(1) Non-linear decision boundaries: A single-layer perceptron can only solve linearly separable problems. An MLP can learn non-linear decision boundaries, so it can handle problems such as XOR that no single straight line can separate.

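(2) Universal Approximation: With enough hidden neurons and a suitable non-linear activation, an MLP can approximate any continuous function on a bounded input domain to arbitrary accuracy. In principle a single hidden layer is enough, although deeper networks are often more efficient in practice. This makes the MLP a powerful model for a wide range of applications.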
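As a small illustration of point (1), the sketch below shows a two-unit ReLU hidden layer computing XOR exactly. The weights are hand-picked for this example rather than learned, and the function names are hypothetical.

```python
def relu(z):
    """Rectified linear unit: passes positive values, clips negatives to 0."""
    return max(0.0, z)

def xor_mlp(x1, x2):
    """A 2-2-1 MLP with hand-picked (not learned) weights that computes XOR."""
    h1 = relu(x1 + x2)          # active when at least one input is 1
    h2 = relu(x1 + x2 - 1.0)    # active only when both inputs are 1
    return h1 - 2.0 * h2        # subtracting 2*h2 cancels the (1, 1) case

for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, xor_mlp(a, b))
```

Without the ReLU non-linearity the two layers would collapse into a single linear function of the inputs, which cannot represent XOR; the hidden layer is what bends the decision boundary.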

The plot below illustrates an example of a Multi-Layer Perceptron (MLP) applied to a classification problem.
