Please explain the process of Forward Propagation.

Answer

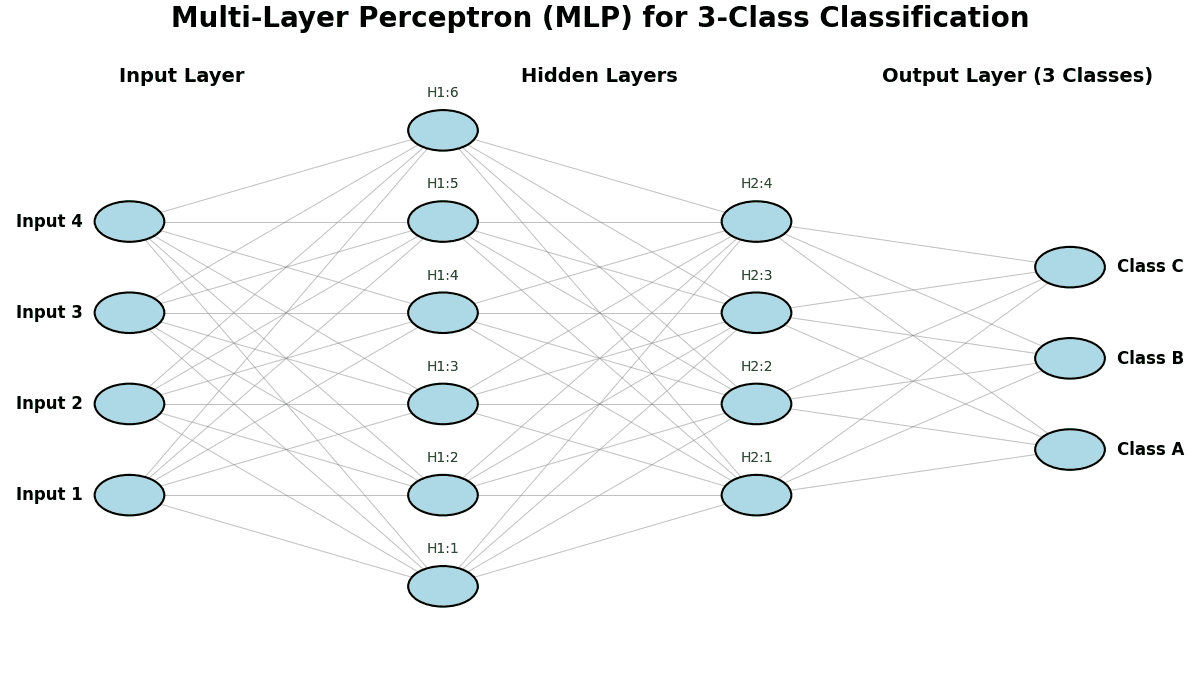

Forward propagation is when a neural network takes an input and generates a prediction. It involves systematically passing the input data through each layer of the network. A weighted sum of the inputs from the previous layer is calculated at each neuron, and then a nonlinear activation function is applied. This process is repeated layer by layer until the data reaches the output layer, where the final prediction is generated.

Here is the process of Forward Propagation:

(1) Input Layer: The network receives the raw input data.

(2) Layer-wise Processing:

Linear Combination: Each neuron calculates a weighted sum of its inputs and adds a bias.

Non-linear Activation: The resulting value is passed through an activation function (e.g., ReLU, sigmoid, tanh) to introduce non-linearity.

(3) Propagation Through Layers: The output from one layer becomes the input for the next layer, progressing through all hidden layers.

(4) Output Generation: The final layer applies a function (like softmax for classification or a linear function for regression) to produce the network’s prediction.