Which activation functions do transformer models use?

Answer

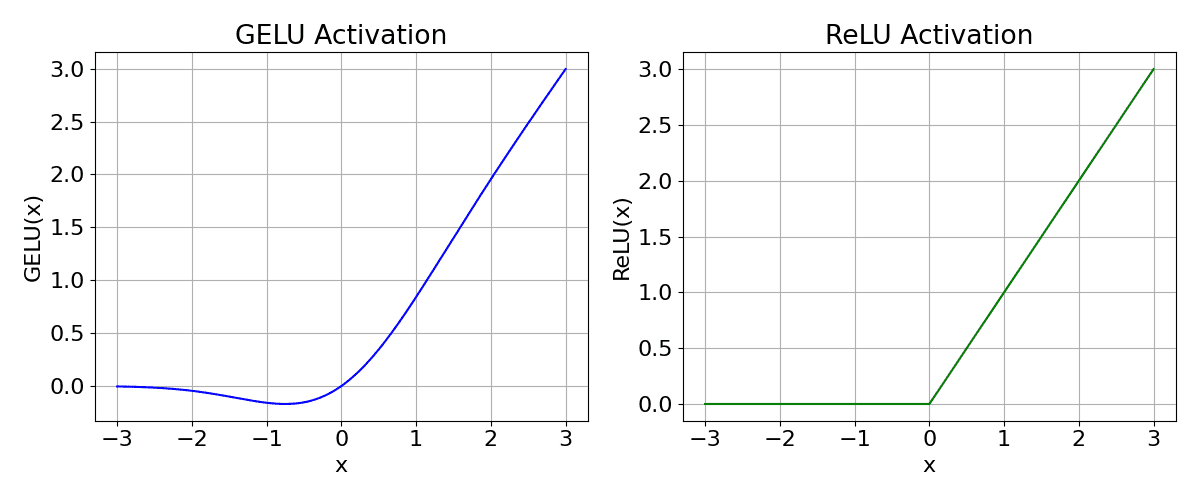

Transformers mainly use ReLU or GELU in the feed-forward layers to introduce non-linearity, and Softmax in attention to turn raw scores into normalized attention weights. GELU is often preferred in newer models because it is smooth around zero, unlike ReLU's hard cutoff, which tends to help optimization.

(1) Feed-Forward Network (FFN):

Uses ReLU or GELU as the non-linear activation.

GELU is more common in modern Transformers such as BERT and GPT, whereas the original Transformer used ReLU.

Equation for GELU:

$$\mathrm{GELU}(x) = x \, \Phi(x)$$

Where: $x$ is the input,

$\Phi(x)$ is the Cumulative Distribution Function (CDF) of the standard Gaussian.

The figure below demonstrates the difference between ReLU and GELU.
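To make the comparison concrete, here is a minimal sketch that evaluates both activations on a few inputs. It assumes the exact form of GELU, the input multiplied by the standard Gaussian CDF, with the CDF written via the error function; the function names `relu` and `gelu` are illustrative and not taken from any particular library, and real frameworks may use a faster approximation instead.

```python
import math


def relu(x):
    """ReLU: pass positive inputs through, clamp negatives to zero."""
    return max(0.0, x)


def gelu(x):
    """Exact GELU: x * Phi(x), where Phi is the standard Gaussian CDF.

    Phi(x) is computed as 0.5 * (1 + erf(x / sqrt(2))).
    """
    phi = 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))
    return x * phi


# Compare the two activations on a small range of inputs.
for x in (-3.0, -1.0, 0.0, 1.0, 3.0):
    print(f"x={x:+.1f}  relu={relu(x):.4f}  gelu={gelu(x):.4f}")
```

For negative inputs ReLU returns exactly zero, while GELU returns a small negative value that fades toward zero as the input becomes more negative. For large positive inputs the two are nearly identical.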

(2) Attention Output:

Uses Softmax to convert raw attention scores into probabilities: weights that are non-negative and sum to 1 across the attended tokens.

Equation for Softmax:

$$\mathrm{softmax}(z_i) = \frac{e^{z_i}}{\sum_{j=1}^{n} e^{z_j}}$$

Where: $z_i$ represents the raw attention score for the i-th token,

$n$ is the total number of tokens considered in attention.
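As a rough illustration, the sketch below applies Softmax to a short list of made-up attention scores. The function name `softmax` and the example scores are assumptions for demonstration only. Subtracting the maximum score before exponentiating is a common numerical-stability trick that does not change the result; it is an addition here, not part of the definition.

```python
import math


def softmax(scores):
    """Convert raw scores into weights that are non-negative and sum to 1."""
    # Shift by the maximum so the largest exponent is exp(0) = 1,
    # which avoids overflow for large scores without changing the output.
    shift = max(scores)
    exps = [math.exp(s - shift) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw attention scores for three tokens.
weights = softmax([2.0, 1.0, 0.1])
print(weights)
print(sum(weights))
```

The token with the highest raw score receives the largest weight, and the ordering of the scores is preserved in the weights.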
