Please explain how KNN Regression works.

Answer

K-Nearest Neighbors (KNN) Regression is a simple, instance-based learning algorithm that predicts a continuous output by finding the K nearest data points in the training set and averaging their output values. Its simplicity makes it easy to understand and implement, but its performance can be sensitive to the choice of K and the distance metric. Additionally, it can be computationally expensive during the prediction phase, especially for large datasets, since it requires comparing the query point to all training samples. KNN is often referred to as a “lazy learner” because it doesn’t build an explicit model during training—it simply memorizes the training data.

(1) Instance-based method: KNN doesn’t learn an explicit model; it stores the training data and makes predictions based on similarity.

(2) Distance-based: It finds the K nearest neighbors to a query point using a distance metric (commonly Euclidean distance).

(3) Averaging Neighbors: The predicted value is the average of the target values of these K nearest neighbors.

(4) Sensitive to K and distance metric: The performance depends on the choice of and how distance is measured (Euclidean, Manhattan, etc.).

(5) No training phase: All computation happens during prediction (also called lazy learning).

Below is the equation for the Prediction Calculation in KNN Regression:

Where: is the predicted value for the query point.

represents the target value of the i‑th nearest neighbor.

denotes the number of neighbors considered.

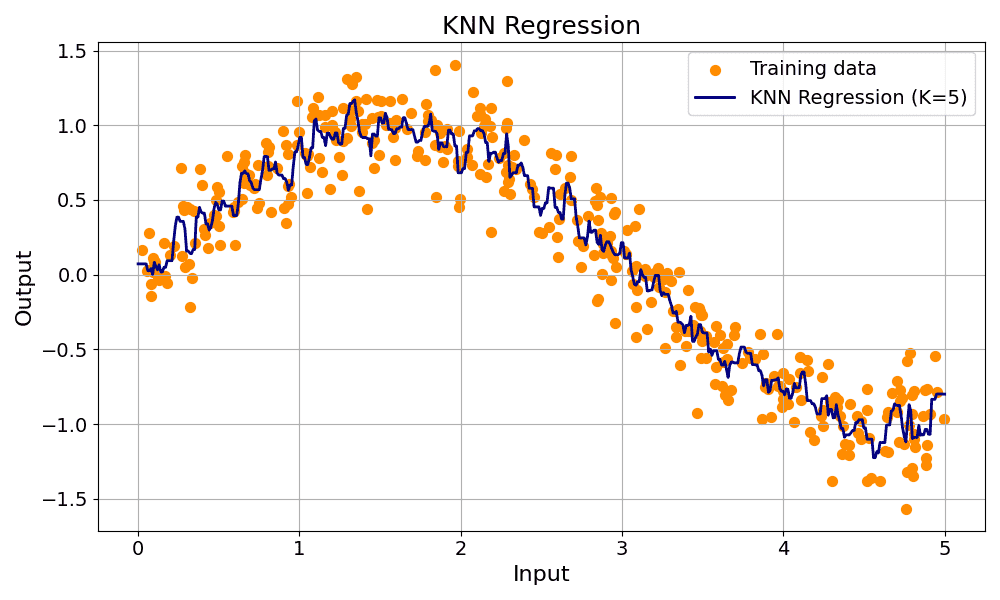

The example below shows KNN for regression.

Leave a Reply