What are the common causes for a deep learning model to output NaN values?

Answer

NaN outputs in deep learning usually stem from numerically unstable operations, exploding gradients, poorly chosen hyperparameters, or bad input data. Prevent them with sound weight initialization, input normalization, numerically stable activation functions, and well-tuned hyperparameters.

The most common causes are:

(1) Exploding Gradients: Gradients grow excessively large during training and overflow to infinity. The resulting weight updates then turn into NaN, for example through inf - inf or 0 * inf.

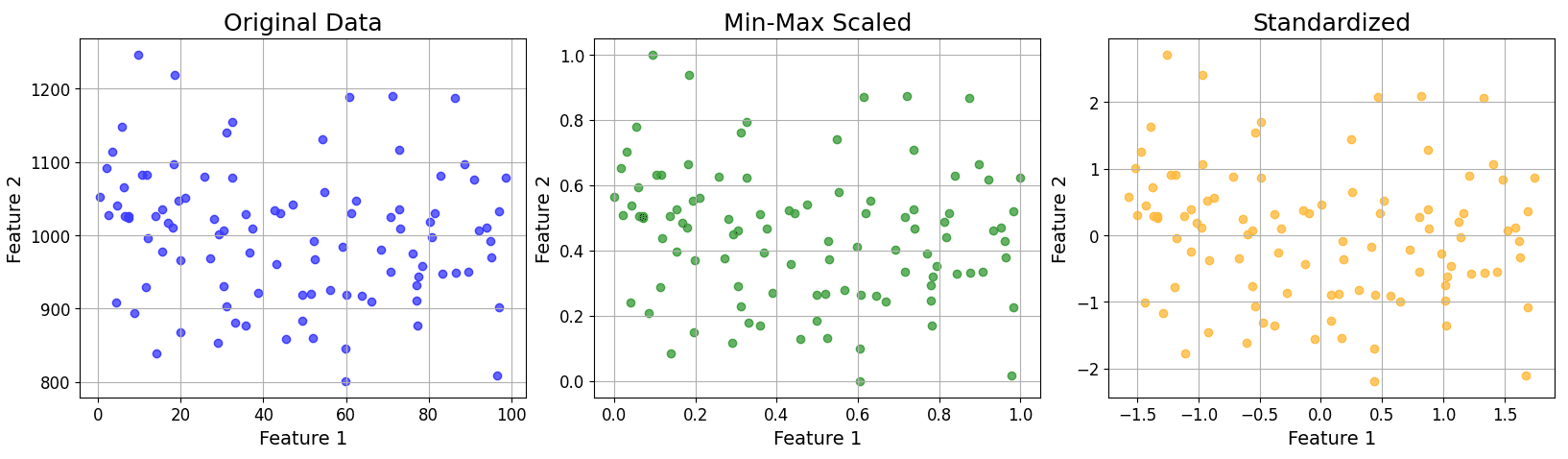

(2) Numerical Instability: Operations such as log(0), division by zero, or the square root of a negative number produce NaN or infinite values. For example, batch normalization divides by the batch standard deviation, so without a small constant (epsilon) in the denominator it divides by zero whenever a batch has zero variance.

(3) Improper Learning Rate: Too high a learning rate can cause parameter updates to diverge and push model parameters to extreme values.

(4) Incorrect Weight Initialization: Initializing weights to very large values (for example, all large positive numbers) can cause activations to overflow in the very first forward pass.

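(5) Data Issues: The input data contains NaN or extreme values. NaN entries propagate straight through the network to the output, and extreme values can overflow intermediate computations.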
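
As a rough illustration of how some of these causes can be guarded against, here is a minimal sketch in plain Python, with lists standing in for tensors. The helper names (`has_non_finite`, `safe_log`, `normalize_batch`, `clip_by_global_norm`) and the epsilon values are assumptions chosen for this example, not part of any framework's API.

```python
import math

EPS = 1e-5  # assumed small constant; a suitable value depends on the dtype in use


def has_non_finite(values):
    """Return True if any value is NaN or +/-inf (data check, cause 5)."""
    return any(not math.isfinite(v) for v in values)


def safe_log(x, eps=1e-12):
    """Clamp the argument away from zero so log(0) never occurs (cause 2)."""
    return math.log(max(x, eps))


def normalize_batch(batch, eps=EPS):
    """Batch-norm style standardization; eps keeps the denominator nonzero (cause 2)."""
    mean = sum(batch) / len(batch)
    var = sum((v - mean) * (v - mean) for v in batch) / len(batch)
    return [(v - mean) / math.sqrt(var + eps) for v in batch]


def clip_by_global_norm(grads, max_norm):
    """Rescale gradients so their L2 norm is at most max_norm (cause 1)."""
    norm = math.sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


if __name__ == "__main__":
    # inf - inf is one way an overflowed value turns into NaN.
    print(math.isnan(float("inf") - float("inf")))

    # A constant batch has zero variance; eps prevents a 0/0 division.
    print(normalize_batch([3.0, 3.0, 3.0]))

    # Large gradients are scaled down to the chosen maximum norm.
    print(clip_by_global_norm([300.0, 400.0], max_norm=1.0))

    # Reject inputs that already contain NaN or inf before training on them.
    print(has_non_finite([0.5, float("nan"), 1.2]))
```

Note that clipping only helps while the gradients are still finite: once a gradient has overflowed to inf, the norm is also inf and the rescaling itself yields NaN, so a finiteness check before the update is still worthwhile.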